Journal: Journal of Intelligent & Fuzzy Systems, vol.

Keywords: Ensemble learning, stacking, cross entropy, gradient

I'm currently trying to implement a VAE for dimensionality reduction purposes. As a base, I started from PyTorch's VAE example for the MNIST dataset. My own problem, however, does not rely on images but on a 17-dimensional vector of continuous values.

One major characteristic of our method is its treatment of each meta instance as a whole with one optimization model, which is different from some other stacking methods such as stacking with multi-response linear regression and stacking with multi-response model trees. In these methods, each meta instance is divided into a set of sub-instances. Multiple models are applied to those sub-instances, one for each class label. There is no connection between the different models. It is very likely that our treatment is a better choice for finding suitable weights.

Experiments with 22 data sets from the UCI machine learning repository show that the proposed stacking approach performs well. It outperforms all three base classifiers, several state-of-the-art stacking algorithms, and some other representative ensemble learning methods on average.
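To make the idea of treating each meta instance as a whole more concrete, here is a minimal sketch of a meta-level classifier trained with cross-entropy and stochastic gradient descent. It is not the authors' implementation: the single softmax layer, the learning rate, the function names, and the synthetic base-classifier outputs produced by `make_meta_instances` are all assumptions chosen for illustration. The point it shows is that one weight matrix sees the concatenated outputs of every base classifier for every class, so the class scores are learned jointly instead of by one independent model per class label.

```python
import math
import random

def softmax(z):
    """Numerically stable softmax over a list of logits."""
    m = max(z)
    exps = [math.exp(v - m) for v in z]
    total = sum(exps)
    return [e / total for e in exps]

def predict_proba(W, b, x):
    """Class probabilities for one whole meta instance x."""
    logits = [sum(w * v for w, v in zip(W[k], x)) + b[k] for k in range(len(W))]
    return softmax(logits)

def mean_cross_entropy(W, b, X, y):
    """Average of -log(probability assigned to the true class)."""
    total = sum(math.log(max(predict_proba(W, b, x)[t], 1e-12)) for x, t in zip(X, y))
    return -total / len(X)

def train_meta(X, y, n_classes, lr=0.1, epochs=50, seed=0):
    """Fit one softmax layer with per-sample SGD on the cross-entropy loss."""
    rng = random.Random(seed)
    d = len(X[0])
    W = [[0.0] * d for _ in range(n_classes)]
    b = [0.0] * n_classes
    order = list(range(len(X)))
    for _ in range(epochs):
        rng.shuffle(order)
        for i in order:
            p = predict_proba(W, b, X[i])
            for k in range(n_classes):
                # Gradient of cross-entropy w.r.t. logit k is p_k - 1[k == y_i].
                g = p[k] - (1.0 if k == y[i] else 0.0)
                b[k] -= lr * g
                for j in range(d):
                    W[k][j] -= lr * g * X[i][j]
    return W, b

def make_meta_instances(n, accuracies, seed=0):
    """Hypothetical stand-in for base-classifier outputs on a two-class task."""
    rng = random.Random(seed)
    X, y = [], []
    for _ in range(n):
        label = rng.randrange(2)
        row = []
        for acc in accuracies:
            correct = rng.random() < acc
            p_true = rng.uniform(0.55, 0.95) if correct else rng.uniform(0.05, 0.45)
            probs = [0.0, 0.0]
            probs[label] = p_true
            probs[1 - label] = 1.0 - p_true
            row.extend(probs)  # the meta instance concatenates all base outputs
        X.append(row)
        y.append(label)
    return X, y

# Three assumed base classifiers, two classes: each meta instance has 6 values.
X, y = make_meta_instances(200, accuracies=[0.8, 0.7, 0.6])
zero_W = [[0.0] * len(X[0]) for _ in range(2)]
loss_before = mean_cross_entropy(zero_W, [0.0, 0.0], X, y)
W, b = train_meta(X, y, n_classes=2)
loss_after = mean_cross_entropy(W, b, X, y)
accuracy = sum(
    max(range(2), key=lambda k: predict_proba(W, b, x)[k]) == t for x, t in zip(X, y)
) / len(X)
print(f"cross-entropy before: {loss_before:.3f}, after: {loss_after:.3f}")
print(f"training accuracy of the meta-level classifier: {accuracy:.2f}")
```

In a real stacking setup the meta instances would come from base classifiers evaluated on held-out folds, not from a random generator, and the numbers printed here say nothing about the results reported for the 22 UCI data sets.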

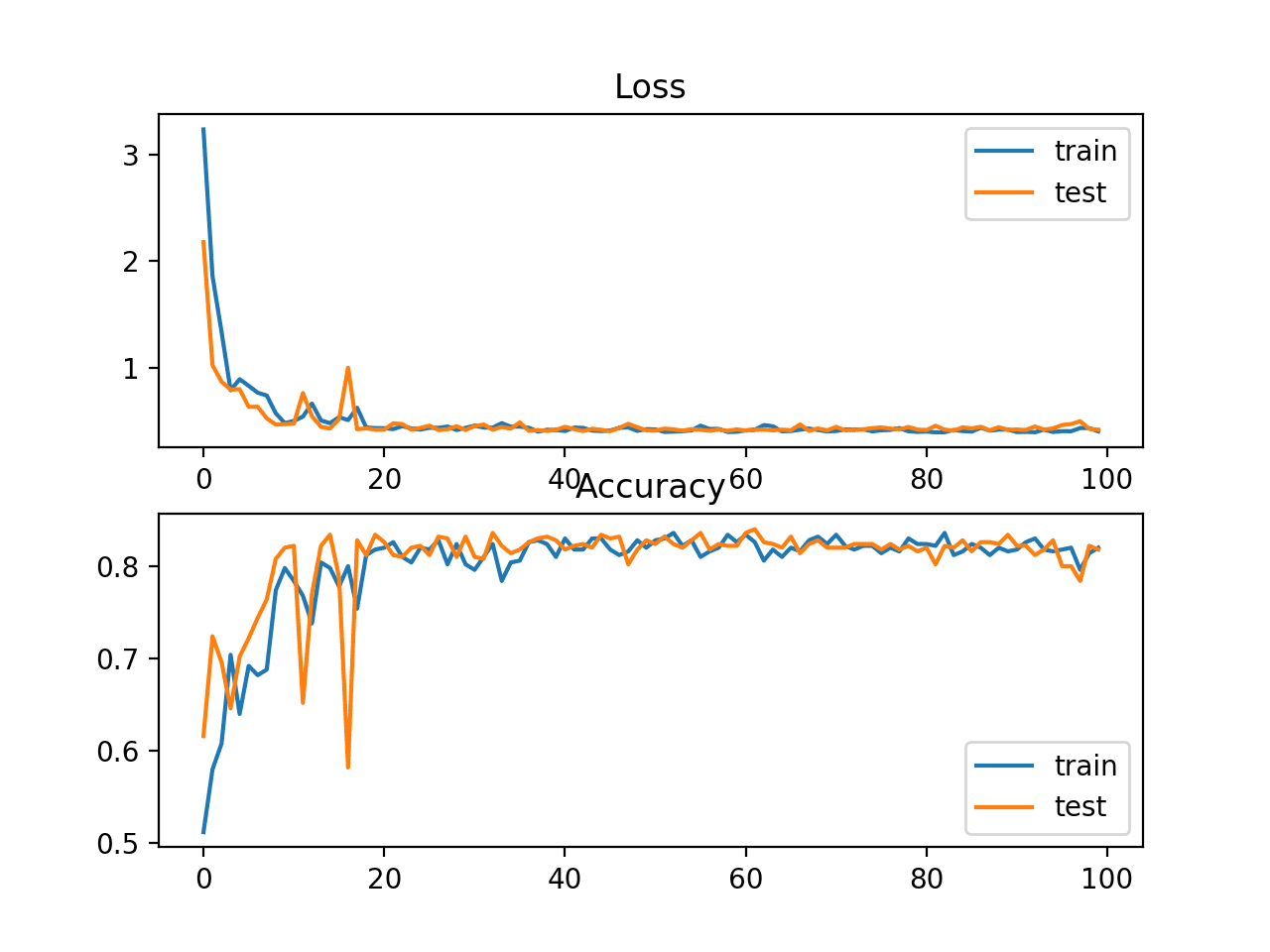

Authors: Ding, Weimin a b | Wu, Shengli a c *

Affiliations: School of Computer Science, Jiangsu University, Zhenjiang, China | School of Mathematics and Information Science, Weifang University, Weifang, China | School of Computing, Ulster University, Newtownabbey, UK

* Corresponding author: Shengli Wu, School of Computer Science, Jiangsu University, Zhenjiang, China.

Abstract: Stacking is one of the major types of ensemble learning techniques in which a set of base classifiers contributes their outputs to the meta-level classifier, and the meta-level classifier combines them so as to produce more accurate classifications. In this paper, we propose a new stacking algorithm that defines the cross-entropy as the loss function for the classification problem. The training process is conducted by using a neural network with the stochastic gradient descent technique.

When a neural network is used for classification, we usually evaluate how well it fits the data with cross entropy. This StatQuest gives you an overview of.

Log-loss: Now we will move to the machine learning section. In case you don't know, Y is the predicted probability of a given data point belonging to a particular class/label. K-L divergence is equal to the difference between cross-entropy and entropy.
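The relationship between K-L divergence, cross-entropy, and entropy can be checked numerically. The sketch below uses natural logarithms and two made-up distributions `p` (true) and `q` (predicted); the function names are illustrative and not taken from any library. It verifies that KL(p || q) = H(p, q) - H(p), and shows log-loss as the mean of -log(Y), where Y is the predicted probability of the true class.

```python
import math

def entropy(p):
    """H(p) = -sum_i p_i * log(p_i), skipping zero-probability terms."""
    return -sum(pi * math.log(pi) for pi in p if pi > 0)

def cross_entropy(p, q, eps=1e-12):
    """H(p, q) = -sum_i p_i * log(q_i); q is clipped to avoid log(0)."""
    return -sum(pi * math.log(max(qi, eps)) for pi, qi in zip(p, q) if pi > 0)

def kl_divergence(p, q, eps=1e-12):
    """KL(p || q) = sum_i p_i * log(p_i / q_i)."""
    return sum(pi * math.log(pi / max(qi, eps)) for pi, qi in zip(p, q) if pi > 0)

def log_loss(true_labels, predicted, eps=1e-12):
    """Mean of -log(Y), where Y is the predicted probability of the true class."""
    total = sum(math.log(max(probs[t], eps)) for probs, t in zip(predicted, true_labels))
    return -total / len(true_labels)

# Made-up example distributions over three classes.
p = [0.7, 0.2, 0.1]
q = [0.5, 0.3, 0.2]

gap = cross_entropy(p, q) - entropy(p)
print(f"KL(p || q)          = {kl_divergence(p, q):.6f}")
print(f"H(p, q) - H(p)      = {gap:.6f}")

# With one-hot targets the entropy term is zero, so cross-entropy,
# K-L divergence, and log-loss for that sample all coincide.
one_hot = [0.0, 1.0, 0.0]
print(f"one-hot cross-entropy = {cross_entropy(one_hot, q):.6f}")
print(f"log-loss              = {log_loss([1], [q]):.6f}")
```

Because the entropy of the true labels does not depend on the model, minimizing cross-entropy during training is equivalent to minimizing the K-L divergence from the true distribution to the predicted one.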
